51 Most Used Machine Learning Tools by Experts

Machine Learning is a rewarding technology to work with, and it becomes enjoyable once you learn to use it the right way. Once you know your way around ML tools, you can explore data, train your own models, build your own algorithms, and try out new methods.

ML has a vast array of tools, software, and platforms, and the field keeps advancing, so new tools appear all the time. To build real experience, you have to pick particular Machine Learning tools and work with them.

This article lists the most widely used Machine Learning tools. You can refer to it if you are having trouble choosing the right ones.

Machine Learning Tools

Below are the ML tools that most people use, along with some that are rarely talked about but can still be of great help. Let's begin:

1. Scikit-Learn

Scikit-Learn is an open-source Python library for ML. It provides a consistent, unified interface for tasks such as regression, classification, and clustering.

It also covers dimensionality reduction and preprocessing, and it includes utilities for training and testing your models. It is built on top of three main libraries: NumPy, SciPy, and Matplotlib.
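To make the unified interface described above concrete, here is a minimal sketch that preprocesses data, trains a classifier, and tests it on held-out rows. The iris dataset, the 75/25 split, and the choice of `LogisticRegression` are illustrative assumptions, not requirements.

```python
from sklearn.datasets import load_iris
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import train_test_split
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

# Load a small built-in dataset (150 iris flowers, 4 features, 3 classes)
X, y = load_iris(return_X_y=True)

# Hold out 25% of the rows for testing, keeping class proportions balanced
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.25, random_state=0, stratify=y
)

# Chain preprocessing (feature scaling) and a classifier into one estimator
model = make_pipeline(StandardScaler(), LogisticRegression(max_iter=1000))
model.fit(X_train, y_train)

# score() returns the mean accuracy on the held-out data
accuracy = model.score(X_test, y_test)
print(f"test accuracy: {accuracy:.2f}")
```

Every scikit-learn estimator follows the same `fit` / `predict` / `score` pattern, so swapping in a different model only changes one line.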

2. KNIME

KNIME is an open-source, GUI-based Machine Learning tool. It does not require prior coding knowledge: you can perform operations using the building blocks that KNIME provides.

KNIME is mostly used for data-related operations such as data mining and data manipulation. It processes data by building workflows and executing them. Its repositories contain many nodes, which you drag into the KNIME workflow editor, connect into a workflow, and then execute.

3. TensorFlow

TensorFlow is an open-source framework for numerical computation and large-scale ML. It can be used for both traditional ML models and neural networks.

TensorFlow has a Python-friendly API, runs on both CPUs and GPUs, and is used to train and run neural network models.

These models are used in areas such as image classification and NLP.
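As a rough illustration of TensorFlow's numerical core, the sketch below uses its automatic differentiation (`tf.GradientTape`) to compute the gradient of a small loss. The matrices and the squared-sum loss are made up for the example; the same code runs on a GPU when TensorFlow detects one.

```python
import tensorflow as tf

# Inputs (2 samples x 2 features) and trainable weights (2 x 1); values are illustrative
x = tf.constant([[1.0, 2.0], [3.0, 4.0]])
w = tf.Variable([[0.5], [-0.5]])

# Record operations on the tape so TensorFlow can differentiate them
with tf.GradientTape() as tape:
    y = tf.matmul(x, w)            # predictions, shape (2, 1)
    loss = tf.reduce_sum(y ** 2)   # simple squared-sum loss

# d(loss)/dw = x^T (2y), computed automatically
grad = tape.gradient(loss, w)
print(loss.numpy(), grad.numpy().ravel())
```

Training a neural network repeats this step: compute the loss, take the gradient, and update the weights.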

4. Weka

Weka is open-source ML software that you use through a GUI. It is user-friendly and is widely used in teaching and research.

Weka also has various industrial applications, and through its add-on packages it gives access to other Machine Learning tools such as Scikit-learn and R.

5. PyTorch

PyTorch is a Deep Learning framework. It makes heavy use of GPU acceleration, which makes it fast, and its design makes it flexible to use.

PyTorch covers core parts of ML such as tensor computation and building deep neural networks. Its interface is Python-first (the core is written in C++), and its tensors work much like NumPy arrays with GPU support, so it can serve as a substitute for NumPy. It is younger than some of the other frameworks on this list, but it has a great future.
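The sketch below illustrates the tensor computation and network-building features mentioned above. It is a minimal example with assumed toy values, and it picks a GPU only when `torch.cuda.is_available()` reports one.

```python
import torch

# Use a GPU when one is available; otherwise fall back to the CPU
device = "cuda" if torch.cuda.is_available() else "cpu"

# Tensors behave much like NumPy arrays but can live on the GPU
x = torch.tensor([1.0, 2.0, 3.0], device=device, requires_grad=True)

# y = sum(x^2); autograd records the operations as they run
y = (x ** 2).sum()
y.backward()  # fills x.grad with dy/dx = 2x

# A tiny deep network: 3 inputs -> 8 hidden units -> 1 output
model = torch.nn.Sequential(
    torch.nn.Linear(3, 8),
    torch.nn.ReLU(),
    torch.nn.Linear(8, 1),
).to(device)
out = model(torch.randn(4, 3, device=device))  # a batch of 4 random samples
print(y.item(), x.grad.tolist(), tuple(out.shape))
```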

6. RapidMiner

RapidMiner is a data science platform with an intuitive interface that is especially helpful for non-programmers. It is cross-platform, and corporations commonly use it to quickly test data and models.

In the RapidMiner interface, you can load your own data and test your own models simply by dragging and dropping items. That is why many non-programmers use it.

7. Google Cloud AutoML

The basic idea of Cloud AutoML is to make AI accessible to everyone, including businesses.

Cloud AutoML builds on Google's pre-trained models so that users can create services for tasks such as speech and text recognition.

Cloud AutoML is becoming popular among companies. Spreading AI to every field is difficult because not every sector has people skilled in AI/ML.

Google created the Cloud AutoML platform to address this gap. It is a useful step, because it lets users from all backgrounds create models and test them on their own data.

8. Azure Machine Learning Studio

Azure Machine Learning Studio is Microsoft's counterpart to Google's offering for providing ML services to users. Its drag-and-drop interface is an easy way to connect datasets and modules.

Azure also aims to make AI available to everyone, and it works on both CPUs and GPUs. It is less popular than Google's platform, but it is still a useful tool.

9. Accord.NET

Accord.NET is an ML framework for the .NET platform, written in C#. It supports classification, regression, and more, and it includes dedicated audio and image packages.

These packages help you train models and build applications such as computer vision and audio processing (machine audition). They are very helpful for testing and manipulating audio and image files.

10. Google Colab

Colab (Colaboratory) is a notebook environment provided by Google. It is based on Jupyter Notebook and is one of the most convenient platforms for ML.

Everything in Colab runs in the cloud, so there is nothing to install locally. You can work with tools like TensorFlow, PyTorch, and Keras in Colab, which also makes it a good place to practice Python. Colab provides a free GPU for extra processing power, and your notebooks are stored in Google Drive.

11. Natural Language Analysis with Python NLTK

Natural Language Processing (NLP) is about creating applications that understand human languages. NLTK is a Python library for NLP.

NLTK includes packages for splitting text into words, sentences, and punctuation, counting characters and words, and other tasks that break language into its components. Models use these components to interpret human language.

NLP is widely used for text analysis, for example classifying text or extracting information from it. NLTK stands for Natural Language Toolkit. There are various other NLP tools as well, but NLTK is one of the most widely used.
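To illustrate the low-level components mentioned above, the sketch below counts characters, words, and punctuation in a made-up sentence using NLTK's `WordPunctTokenizer` and `FreqDist`. This tokenizer is chosen because it needs no extra data downloads; it is one option among several.

```python
from nltk import FreqDist
from nltk.tokenize import WordPunctTokenizer

text = "Machine learning is fun. Machine learning is also hard!"

# Split the text into word and punctuation tokens (regex based, no downloads needed)
tokens = WordPunctTokenizer().tokenize(text)

# Separate alphabetic words from punctuation marks
words = [t.lower() for t in tokens if t.isalpha()]
punctuation = [t for t in tokens if not t.isalnum()]

# Count how often each word occurs
freq = FreqDist(words)

print("characters:", len(text))
print("words:", len(words), "punctuation:", punctuation)
print("most common:", freq.most_common(3))
```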

12. Jupyter Notebook

Jupyter Notebook is one of the most widely used platforms for Machine Learning in the industry. It lets you run code interactively, cell by cell, and see the results immediately.

The name Jupyter comes from Julia, Python, and R, the three languages it originally targeted; kernels now exist for many other languages too. Jupyter Notebook is especially popular for Python, and you can use it to run your ML code.

Jupyter lets you store and share live code in the form of notebooks, and Google Colab's environment is based on it. You can launch it from GUIs such as Anaconda Navigator and the WinPython launchers.

13. Glueviz

Glueviz is an interface for exploring data graphically. You can load your data and view it visually, and you can create 2D and 3D plots of it for better analysis.

Glueviz can be installed on its own, and you can also launch it from Anaconda Navigator. The underlying Python library is called glue. With glue, you can link related datasets and explore those links across views. It is also used to create graphs such as histograms.

14. Orange3

Orange3 is the latest version of Orange, a data-mining software.

Orange helps with data visualization, preprocessing, and other data-related tasks. You can launch it via Anaconda Navigator. It offers a visual, drag-and-drop UI, and it can also be used from Python code.

15. RStudio

RStudio is an IDE for the R language. It is separate from R itself: it uses an installed copy of R to write and run programs. It is one of the most popular interfaces for R.

R is mainly used for data analytics and related tasks, and RStudio makes R much more convenient to work with. Anaconda Navigator also provides access to RStudio.

16. VS Code

VS Code (Visual Studio Code) is a free source-code editor from Microsoft. It is built on Node.js (through the Electron framework) and is widely used in enterprises.

Through extensions, it integrates closely with Azure Machine Learning.

17. IBM Watson

IBM provides a web interface through which you can use Watson. Watson is a question-answering system based on NLP that interacts with humans. Fields such as information extraction and automated learning use it. It is often used for testing and research, but industries working on NLP also make use of it.

Its aim is to give the user a human-like experience.

18. Amazon Machine Learning

Amazon Machine Learning is Amazon's ML platform. It offers services such as SageMaker and Redshift for Machine Learning applications.

Amazon is a major player in ML and runs one of the strongest research programs in the world.

SageMaker helps you develop, train, and test models, and AWS DeepRacer, a small autonomous race car, helps you learn reinforcement learning.

19. OpenNN

OpenNN is a software library, written in C++, that implements neural networks and focuses on Deep Learning research.

OpenNN includes some data mining functions used for predictive analytics. It helps you create algorithms and build your own neural networks.

20. Theano

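Theano is a library similar to TensorFlow. It helps with math-heavy calculations such as vector and matrix operations.

Theano is built around NumPy and works on both CPUs and GPUs.

TensorFlow's core is written in C++ with a Python interface. Theano, by contrast, is written in Python, but it compiles your expressions into optimized C or CUDA code, which makes them fast to execute. Its original developers stopped major development in 2017.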

21. Torch 7

Torch is an ML library for scientific computing, mainly used for Deep Learning. It is scripted in Lua. Torch is no longer actively developed, but PyTorch is.

Torch 7 was the latest version before PyTorch came into the picture.

22. LIBSVM

LIBSVM is one of two related ML libraries developed at National Taiwan University; the other is LIBLINEAR. It uses a Sequential Minimal Optimization (SMO)-type algorithm to train kernel SVMs, and it supports classification, regression, multiclass classification, cross-validation, and more.

LIBSVM is written in C++, and bindings exist for many other languages.
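scikit-learn's `SVC` class is implemented on top of LIBSVM, so it offers a convenient way to try the kernel SVM and cross-validation features described above from Python. The toy `make_moons` dataset and the parameter values below are assumptions chosen for illustration.

```python
from sklearn.datasets import make_moons
from sklearn.model_selection import cross_val_score
from sklearn.svm import SVC

# Two interleaving half-circles: not separable by a straight line
X, y = make_moons(n_samples=200, noise=0.15, random_state=0)

# SVC delegates training to LIBSVM; the RBF kernel can learn the curved boundary
clf = SVC(kernel="rbf", C=1.0, gamma="scale")

# 5-fold cross-validation: train on 4/5 of the data, test on the rest, 5 times
scores = cross_val_score(clf, X, y, cv=5)
print(scores.round(2), scores.mean())
```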

23. LIBLINEAR

LIBLINEAR is the second library, alongside LIBSVM. It uses coordinate descent (among other solvers) to train large-scale linear models such as logistic regression and linear SVMs.

LIBLINEAR is often used for L2-regularized models, and it also supports L1 regularization.
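In scikit-learn, `LogisticRegression(solver="liblinear")` and `LinearSVC` both call into LIBLINEAR. The minimal sketch below, on an assumed built-in dataset and with illustrative `C` values, compares L2 and L1 regularization; L1 tends to set some coefficients exactly to zero.

```python
from sklearn.datasets import load_breast_cancer
from sklearn.linear_model import LogisticRegression
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

X, y = load_breast_cancer(return_X_y=True)  # 569 samples, 30 features, 2 classes

# solver="liblinear" hands the optimization to LIBLINEAR
l2_model = make_pipeline(
    StandardScaler(), LogisticRegression(solver="liblinear", penalty="l2", C=1.0)
)
l1_model = make_pipeline(
    StandardScaler(), LogisticRegression(solver="liblinear", penalty="l1", C=0.1)
)
l2_model.fit(X, y)
l1_model.fit(X, y)

# The last pipeline step is the fitted classifier; count exactly-zero weights
n_zero_l2 = int((l2_model[-1].coef_ == 0).sum())
n_zero_l1 = int((l1_model[-1].coef_ == 0).sum())
print("zero weights, L2:", n_zero_l2, "L1:", n_zero_l1)
```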

24. MLlib

MLlib, also called Spark MLlib, is Apache Spark's machine learning library. It is used for classification, regression, collaborative filtering, feature extraction, and more. Because it runs on Spark's distributed engine, it is efficient and fast on large datasets.

25. Pylearn2

Pylearn2 is an ML library built on top of Theano, so it offers functionality similar to Theano's.

Pylearn2 can perform math calculations and run on both CPUs and GPUs. You should understand Theano before working with Pylearn2. It also aims to wrap other libraries, such as Scikit-learn, where that is practical.

Pylearn2 had various future goals, but it is no longer actively developed, so many of them are unlikely to be fulfilled.

26. Vowpal Wabbit

Vowpal Wabbit is a project that aims to provide fast learning algorithms. It was started at Yahoo, and Microsoft is its current sponsor.

Vowpal Wabbit is known for very fast online learning, and it continues to be developed as a research platform.

27. Caffe

Caffe is a Deep Learning library that has been widely used in industry. It is known for its expressiveness, speed, and modularity.

Caffe was created at the University of California, Berkeley. It is written in C++ and provides a Python interface. It is mainly used for image classification and segmentation.

Caffe supports both GPUs and CPUs. Facebook later created Caffe2, which added features such as recurrent neural networks; Caffe2 has since been merged into PyTorch.

28. Mahout

Mahout is an open-source, Hadoop-based platform mainly used for data mining and ML. It supports techniques such as classification, regression, and clustering.

Many major companies use Mahout, including Google, Twitter, and Yahoo. It also provides math building blocks such as vectors.

29. Pandas

Pandas is one of the most basic and easiest ML libraries to practice with. It is a Python library.

Pandas handles data manipulation and other data-related tasks. It provides fast, efficient data structures that make structured and time-series data very easy to work with. Its goal is to become the most powerful and flexible data manipulation tool available.
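Here is a minimal sketch of the structured and time-series handling described above. The store names and unit counts are hypothetical.

```python
import pandas as pd

# Six days of (hypothetical) sales, indexed by date
sales = pd.DataFrame(
    {"store": ["A", "B", "A", "B", "A", "B"],
     "units": [10, 4, 12, 6, 8, 5]},
    index=pd.date_range("2024-01-01", periods=6, freq="D"),
)

# Structured data: group by a column and aggregate
per_store = sales.groupby("store")["units"].sum()

# Time-series data: total units per 2-day window
per_2_days = sales["units"].resample("2D").sum()

print(per_store, per_2_days, sep="\n\n")
```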

30. NumPy

NumPy is a Python library for scientific computing, and more advanced libraries like TensorFlow and Theano build on it.

This library comes in handy for math-based calculations. It provides an N-dimensional array object along with sophisticated tools for numerical computation.

NumPy can also serve as an efficient multi-dimensional container for generic data, which lets it integrate with a wide variety of databases.
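The short sketch below shows the N-dimensional array, vectorized math, and the structured (record-like) data types that let NumPy act as a container for generic data. All values are made up for illustration.

```python
import numpy as np

# A 2-dimensional array (2 rows x 3 columns)
a = np.array([[1, 2, 3], [4, 5, 6]], dtype=np.float64)

# Broadcasting: the row vector is added to every row without a Python loop
b = a + np.array([10, 20, 30])

# Linear algebra: (2x3) @ (3x2) gives a 2x2 matrix
gram = a @ a.T

# A structured dtype stores record-like "generic data" in a single array
records = np.array(
    [("alice", 31), ("bob", 27)],
    dtype=[("name", "U10"), ("age", "i4")],
)
mean_age = records["age"].mean()
print(b, gram, mean_age, sep="\n")
```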

31. Matplotlib

Matplotlib is a plotting library for Python. With it, you can draw histograms, bar graphs, pie charts, and more. The glue library used in Glueviz is also used for plotting graphs.

The main difference is that Matplotlib is primarily for 2D drawing (its mplot3d toolkit adds basic 3D plots), whereas glue is designed for linked exploration in both 2D and 3D and can also take images as input. Matplotlib is built on NumPy arrays, so it can handle large amounts of data.
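As a rough sketch, the example below draws a 2D histogram from a large NumPy array and a simple 3D line with Matplotlib's bundled mplot3d toolkit, then saves the figure to an in-memory PNG. Using the `Agg` backend is an assumption so the script runs without a display.

```python
import io

import matplotlib
matplotlib.use("Agg")  # non-interactive backend: no window needed
import matplotlib.pyplot as plt
import numpy as np

rng = np.random.default_rng(0)
data = rng.normal(loc=0.0, scale=1.0, size=10_000)  # a large NumPy array

fig = plt.figure(figsize=(8, 3))

# 2D: histogram of the samples
ax1 = fig.add_subplot(1, 2, 1)
counts, edges, _ = ax1.hist(data, bins=30)
ax1.set_title("Histogram")

# 3D: a helix, drawn with the mplot3d toolkit that ships with Matplotlib
ax2 = fig.add_subplot(1, 2, 2, projection="3d")
t = np.linspace(0, 4 * np.pi, 200)
ax2.plot(np.cos(t), np.sin(t), t)
ax2.set_title("3D line")

# Save to memory instead of disk
buf = io.BytesIO()
fig.savefig(buf, format="png")
plt.close(fig)
```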

32. DeepLearning4j

It is a Deep Learning library.

DeepLearning4j is written for Java and other JVM languages. It integrates closely with Hadoop and Apache Spark.

The aim of this Machine Learning tool is to make AI available to businesses. It can work on both CPUs and GPUs.

33. MLPY

MLPY is an ML package for Python, built on the NumPy and SciPy libraries.

MLPY provides robust, efficient methods for supervised and unsupervised problems. It is multiplatform and works with both Python 2 and Python 3.

MLPY has features like regression, classification, dimensionality reduction, etc.

34. H2O

H2O is an open-source ML platform.

H2O supports widely used statistical and ML algorithms, and it also has AutoML capabilities. It is written in Java but provides interfaces for various languages, such as Python and R.

H2O comes with various packages, such as Sparkling Water and H2O4GPU. The latest version is H2O-3. It integrates closely with frameworks like Hadoop.

35. WinPython

WinPython is an open-source distribution of Python for Windows. It is similar to Anaconda, but it has no GUI like Anaconda Navigator to interact with.

Like Anaconda, it provides access to Jupyter Notebook and Spyder. Unlike Anaconda, WinPython is lightweight and stays closer to a standard Python installation.

It also takes little time to install, whereas Anaconda takes a long time to install.

36. Spyder

Spyder is a Python IDE for scientific programming. It integrates with libraries like pandas, SciPy, NumPy, and Cython for scientific calculations.

You can launch Spyder via Anaconda or WinPython. It differs from Jupyter in that Jupyter makes it easier to share your work.

Jupyter is also better for writing small programs, while Spyder is the better choice for scientific work.

37. cuda-convnet

cuda-convnet is a C++ package; its name means a CUDA implementation of a Convolutional Neural Network. It relies on NVIDIA GPUs for its speed.

Its successor is called cuda-convnet2. Its main improvements are faster training on GPUs and support for training on multiple GPUs.

38. MALLET

MALLET is a Java-based package for NLP and other information extraction tasks. Its name stands for MAchine Learning for LanguagE Toolkit.

MALLET provides tools for document classification and for extracting entities from text. This means you can pull specific pieces of information, such as names, out of raw text.

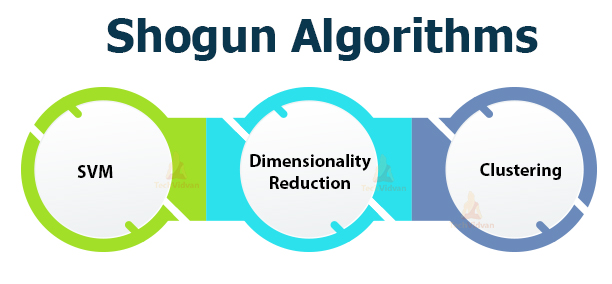

39. Shogun

Shogun is an open-source library written in C++. It provides interfaces for languages such as Octave, Python, and R.

Shogun supports various algorithms. These include:

- SVM

- Dimensionality reduction algorithms

- Clustering algorithms such as k-means, and nearest-neighbor methods such as k-NN

40. Keras

Keras is a great ML tool for beginners. It is a high-level neural network API.

Earlier versions of Keras ran on top of Theano, TensorFlow, or CNTK; Keras 3 runs on TensorFlow, JAX, or PyTorch. It can build CNNs, RNNs, or combinations of the two. The library is very user-friendly: it is designed as an API for human beings, not machines.

Keras is one of the most widely used Machine Learning tools, and one of the best places to start.

41. Pattern

Pattern is a web mining module for Python. It is used for NLP, text mining, text analysis, and more.

Pattern is installed with pip. It helps you build vector space models, SVMs, and more.

Older versions of Pattern supported only Python 2 (version 2.5 and above), and a separate port called Pattern3 was created for Python 3. Later releases (Pattern 3.6) added official Python 3 support.

42. Gensim

Gensim is a Python library mainly used in NLP, especially for topic modeling. This Machine Learning tool also has applications in information retrieval and extraction. It runs on the NumPy and SciPy libraries, so it can perform math and scientific tasks as well.

Gensim can process large amounts of data, even corpora larger than memory, by streaming documents. It is used for vector space modeling.

43. SciPy

SciPy is a Python library built on top of NumPy.

SciPy can perform scientific and higher-order math operations, such as linear algebra, integration, and optimization.

It operates on NumPy arrays. A key reason to prefer SciPy is speed: many of its routines wrap compiled C and Fortran libraries, so these operations run fast and are easy to call.
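The minimal sketch below shows two of the operations named above: solving a linear system and numerical integration. The matrix and the integrand are illustrative choices.

```python
import numpy as np
from scipy import integrate, linalg

# Solve the linear system A x = b, i.e. 3x + y = 9 and x + 2y = 8
A = np.array([[3.0, 1.0], [1.0, 2.0]])
b = np.array([9.0, 8.0])
x = linalg.solve(A, b)

# Numerically integrate sin(t) from 0 to pi (the exact answer is 2)
area, abs_err = integrate.quad(np.sin, 0.0, np.pi)

print(x, area, abs_err)
```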

44. Dask

Dask is a Python library for parallel computing. It has two main components:

- Dynamic task scheduling

- Big data collections

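Dask's collections, such as arrays and dataframes, support multi-dimensional data analysis on datasets that can be larger than memory. Its scheduler runs many tasks in parallel, and it is fast, flexible, and reliable when doing so.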
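To illustrate the two components listed above, the sketch below builds a lazy, chunked Dask array (a big data collection) and a small `dask.delayed` task graph, then lets the scheduler compute them. The array size, chunk shape, and the hypothetical `square` helper are assumptions picked for the example.

```python
import dask
import dask.array as da

# A 4000 x 4000 array split into 16 chunks of 1000 x 1000; nothing is computed yet
x = da.ones((4000, 4000), chunks=(1000, 1000))

# Build a lazy task graph: double every element, take column means, then their mean
result = (x * 2).mean(axis=0).mean()

# compute() hands the graph to Dask's scheduler, which can process chunks in parallel
value = result.compute()


# dask.delayed turns ordinary function calls into tasks (hypothetical helper)
@dask.delayed
def square(n):
    return n * n


total = dask.delayed(sum)([square(i) for i in range(5)])
print(value, total.compute())
```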

45. Numba

Numba is a Python tool that works closely with NumPy.

Numba is used to speed up Python applications. It is a just-in-time compiler: it uses the LLVM compiler infrastructure to convert Python code into machine code, which helps it achieve much greater speeds.

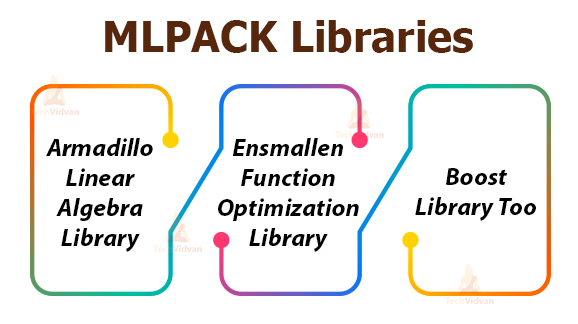

46. MLPACK

MLPACK is an ML library written in C++. It aims to provide fast and flexible implementations. MLPACK relies on these libraries:

- Armadillo linear algebra library

- Ensmallen function optimization library

- Boost library (required by older releases)

MLPACK is mainly used by scientists and engineers. It supports RNNs as well.

47. DLIB

DLIB is a modern C++ toolkit containing ML algorithms. It is designed to solve real-world problems.

DLIB is used in robotics, embedded devices, mobile phones, and more. It covers various ML algorithms, image processing, GUI development, and networking.

48. Ramp

Ramp is a Python module for rapid prototyping of ML solutions.

Ramp is easily extensible, which means you can plug in estimators from other tools such as Scikit-learn.

Ramp can also store and retrieve data on disk in HDF5 format.

49. CUV

CUV is a C++ library that provides basic matrix operations and convolutions.

CUV works on both CPUs and GPUs and includes Python bindings, which makes it useful for fast prototyping.

CUV makes NVIDIA CUDA easy to use.

50. Lasagne

Lasagne is a lightweight library for building and training neural networks in Theano.

Lasagne supports network types such as CNNs, RNNs, and LSTMs, and its architecture allows networks with multiple inputs and outputs. Like Theano, Lasagne runs on both CPUs and GPUs.

Lasagne's main design principles include simplicity, transparency (it does not hide Theano behind abstractions), and modularity. Note that it depends on Theano, which is no longer actively developed.

51. OpenCV

OpenCV (Open Source Computer Vision Library) is a library for computer vision. It is written in C++, and its Python bindings work with NumPy arrays. It is one of the most widely used tools in the world.

Summary

This article covered 51 of the most widely used Machine Learning tools. Most are still used in industry, though a few, such as Torch 7, are no longer actively developed. They are written in different languages: some in C++, some in Python, and some in Java. This shows how vast ML has become: the same kinds of tools exist across many languages.

All of the Machine Learning tools above are worth knowing. If you are getting into ML, practice them by writing code.