Reasons to Learn Hadoop – Importance of Big Data Hadoop

Before learning any technology, we should know why it is worth learning and what benefits it brings. In this article, we will see the reasons to learn Hadoop.

The article lists the top 11 reasons to learn Hadoop. It also answers two common questions: why should you master Hadoop, and what is the importance of Hadoop?

So, let us explore why Hadoop is important.

Top 11 Reasons to Learn Hadoop

In today’s world, Big Data is a buzzword. Nowadays, almost all companies collect data posted online. Data from sources such as Instagram, Facebook, Twitter, email, and e-commerce sites makes up Big Data.

Companies are looking for a cost-effective, reliable, and innovative solution for storing and analyzing Big Data. Hadoop is one of the most widely adopted tools for meeting these demands.

The major reasons to learn Hadoop are:

1. Hadoop as a Gateway to Big Data Technologies

Apache Hadoop is a cost-effective and reliable tool for Big Data analytics that many companies have adopted. It is not a single tool but a complete ecosystem.

Apache Hadoop has a very large ecosystem that serves a wide range of organizations. Organizations ranging from web start-ups to large enterprises use Hadoop to meet their business needs.

The Hadoop ecosystem comprises many components such as HBase, Hive, ZooKeeper, and MapReduce. These components cater to a broad spectrum of applications.

No matter how many new technologies come and go, Apache Hadoop is likely to remain a backbone of the Big Data world. It is a gateway to the other Big Data technologies.

So learning Hadoop helps you boost your career in the Big Data world and master the other Big Data technologies that fall under the Hadoop ecosystem.

2. Hadoop as a Disruptive Technology

Hadoop is an excellent alternative to traditional data warehousing systems in terms of reliability, scalability, cost, performance, and storage. It has revolutionized data processing and brought a drastic change in data analytics.

Also, the Hadoop ecosystem is going through continuous enhancement and experimentation. Big Data and Apache Hadoop are taking the world by storm, and if we do not want to be left behind, we have to ride the tide.

3. Move to a Big Company

Hardly any industry remains untouched by Big Data. It has spread across domains such as banking, retail, healthcare, government, media, natural resources, transportation, and so on.

Companies are increasingly making use of Big Data, which means industries are realizing its power. Hadoop is a software framework that can harness that power to improve the business.

Companies around the globe are trying to draw information from various sources to attract their audience, increase revenue and profits, and expand their business. Companies like Walmart, Facebook, and the New York Times all use Hadoop and therefore need Hadoop experts.

So learning Hadoop and becoming a Hadoop expert can help you land your dream job.

4. Managing Big Data

We are living in a world where we generate huge volumes of data every second. This vast amount of data, called Big Data, needs to be managed.

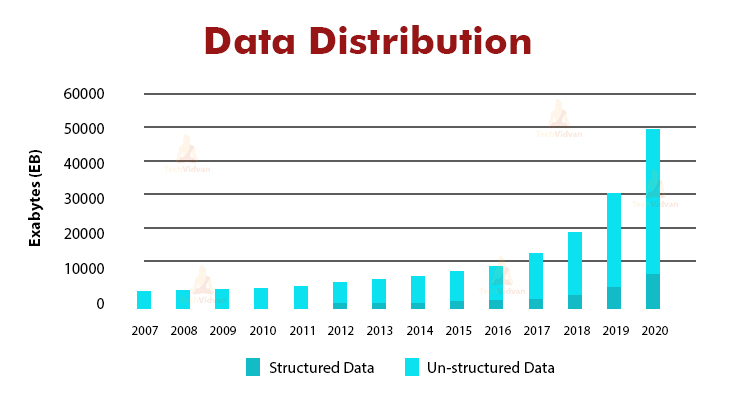

From the above chart, we can see that unstructured data is increasing exponentially. Managing such huge volumes requires Big Data technologies like Hadoop. According to a Google report, from the dawn of civilization until 2003, mankind generated 5 exabytes of data.

But now we are producing 5 exabytes of data every two days. Thus, there is an increasing need for a cost-effective and reliable solution that handles Big Data, and Apache Hadoop addresses this need.

With its low cost and robust architecture, Hadoop is well suited to storing and processing Big Data.

5. Exponential Growth of Big Data Market

As per a Forbes report, “Hadoop Market is expected to reach $99.31B by 2022 at a CAGR of 42.1%”. Over the last few years, Big Data has been a game-changer in most industries. A large number of companies from various domains have adopted Big Data.

Using Big Data tools like Apache Hadoop and Apache Spark, companies examine large data sets to identify hidden patterns, unknown correlations, customer preferences, market trends, and other useful business information.

In India, the Big Data analytics sector is expected to grow eightfold. As per NASSCOM, it will reach USD 16 billion by 2025, up from USD 2 billion.
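The growth figures quoted above can be checked with a little arithmetic. The sketch below is illustrative only: the function names are made up for this example, and because the article gives no base year for the USD 2 billion figure, the time horizons used for the annual growth rate are assumptions.

```python
def growth_multiple(start_value, end_value):
    """Return how many times larger end_value is than start_value."""
    return end_value / start_value


def implied_cagr(start_value, end_value, years):
    """Return the compound annual growth rate implied by the two values."""
    return (end_value / start_value) ** (1 / years) - 1


if __name__ == "__main__":
    # Figures quoted in the article, in billions of USD.
    start, end = 2, 16
    print(f"Growth multiple: {growth_multiple(start, end):.0f}x")

    # ASSUMPTION: the article gives no base year, so these horizons are
    # hypothetical and only show how the annual rate depends on the period.
    for years in (5, 8, 10):
        print(f"{years} years -> {implied_cagr(start, end, years):.1%} per year")
```

The first function confirms the "eightfold" claim directly. The second shows that the same eightfold growth implies very different yearly rates depending on how many years it is spread over.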

As the Big Data market grows, so does the need for Big Data technologies. Apache Hadoop is the base of many of them: technologies like Hive and HBase are built on top of Hadoop.

The trends of Hadoop and Big Data are tightly coupled. So, Big Data and Hadoop have a promising future and are unlikely to vanish for at least the next 20 years.

6. Increasing Demand for Hadoop Professionals

Apache Hadoop is one of the most promising technologies for handling the growing volume of Big Data. It is an economical, reliable, and scalable solution to many Big Data problems.

With the increase in Big Data sources and in the amount of data, Hadoop has become one of the most prominent Big Data technologies. This rise drives the demand for Hadoop professionals.

Big companies like Walmart, Facebook, LinkedIn, and eBay are looking for Hadoop professionals, and the number of Hadoop jobs around the globe is increasing rapidly. So learning Hadoop gives you a tremendous opportunity to find jobs across the globe.

7. Scarcity of Big Data Hadoop Professionals

As we have seen above, Hadoop job opportunities are increasing rapidly. But candidates often fail to seize those opportunities, and many of these job roles are still vacant.

This is mainly due to a large skill gap in the IT market. The lack of a proper skill set for Big Data and Hadoop technology leads to a wide gap between supply and demand.

Thus, this is the right time to step ahead and build a bright career in the Big Data and Hadoop market. This can be done by mastering Hadoop before it’s too late.

So, become a Hadoop expert and land your dream job. Follow the DataFlair Hadoop tutorial guide and start your journey for building a bright career.

8. Hadoop – A Maturing Technology

Hadoop is evolving with time, and Hadoop 3.0 has now come into the market. It is supported by vendors such as Hortonworks, MapR, and Tableau, and even by BI experts. New players such as Apache Spark and Apache Flink are also entering the Big Data market.

These technologies promise faster processing and provide a single platform for different kinds of workloads. Hadoop is compatible with these new players and provides reliable, robust data storage on which they can be deployed.

The introduction of Apache Spark has enhanced the Hadoop ecosystem and enriched the data processing capability of Apache Hadoop. Spark was designed to work with HDFS. Even on Hadoop 1.x, we can take advantage of Spark’s capabilities.

Flink, another rising technology, is also compatible with Hadoop. Existing MapReduce functions can be reused inside a Flink program without changing their code, and they can be mixed with other Flink functions.

9. Learn Hadoop for a Fat Paycheck

One of the reasons for learning Hadoop is the fat paycheck. Because of the shortage of Hadoop professionals, companies offer them high salaries. As per IBM, in the US, the demand for data professionals will reach 364,000 by 2020.

As per payscale.com, the salary offered to Hadoop professionals ranges from $93K to $127K annually, depending on the job role. This is 95% higher than the average salary offered for other job postings.

10. Caters to Different Professional Backgrounds

The Hadoop ecosystem consists of various tools that can be used by professionals from different backgrounds. Those with a programming background can write MapReduce programs in a language of their choice, such as Java or Python.

Those familiar with scripting languages will find Apache Pig a good fit. Alternatively, those comfortable with SQL can use Apache Hive or Apache Drill.
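To give programmers a feel for the MapReduce model mentioned above, here is a minimal word-count sketch in Python. It is an in-memory simulation, not a Hadoop job: the function names `mapper`, `reducer`, and `run_word_count` are hypothetical, and the sort-and-group step only stands in for the shuffle phase that Hadoop performs across a cluster.

```python
from itertools import groupby
from operator import itemgetter


def mapper(line):
    """Map step: emit a (word, 1) pair for every word in a line."""
    for word in line.lower().split():
        yield word, 1


def reducer(word, counts):
    """Reduce step: sum all counts emitted for one word."""
    return word, sum(counts)


def run_word_count(lines):
    """Run map, shuffle (sort and group by key), and reduce in memory."""
    pairs = [pair for line in lines for pair in mapper(line)]
    pairs.sort(key=itemgetter(0))  # stands in for Hadoop's shuffle-and-sort
    return dict(
        reducer(word, (count for _, count in group))
        for word, group in groupby(pairs, key=itemgetter(0))
    )


if __name__ == "__main__":
    sample = ["big data needs hadoop", "hadoop stores big data"]
    print(run_word_count(sample))
```

On a real cluster, the map and reduce steps would typically run as separate scripts on many machines, for example through Hadoop Streaming, with the framework handling the grouping of keys between them.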

The Big data analytics market is growing all around the world. This strong growth pattern creates opportunities for all IT Professionals.

Hadoop is a good fit for:

- Senior IT Professionals

- Software Developers, Project Managers

- DBAs and DB professionals

- Software Architects

- Mainframe professionals

- Analytics & Business Intelligence Professionals

- ETL and Data Warehousing Professionals

- Graduates looking to build a career in the Big Data field

- Testing professionals

11. Hadoop has a Better Career Scope

The Hadoop ecosystem consists of various components providing batch processing, real-time stream processing, machine learning, and many more. Learning Hadoop opens the door to various job profiles like:

- Big Data Architect

- Hadoop Developer

- Data Scientist

- Hadoop Administrator

- Data Analyst

Hadoop offers a vast number of opportunities for both freshers and experienced professionals. People who already work in the IT industry as ETL developers, mainframe professionals, architects, and so on have an edge over freshers.

Summary

I hope you are now eager to learn Hadoop. Learning Hadoop gives you a tremendous opportunity to boost your career. It is a technology that is unlikely to vanish or be replaced anytime soon.

Hadoop is unlikely to be replaced even in the next 20 years. As the Big Data market grows, so does the need for Hadoop professionals. What if you are the one the company is looking for? Learning Hadoop can help you land your dream job at one of the world’s top companies.