Introduction to Apache Spark SQL Datasets

A Spark Dataset is a distributed collection of data. It is a newer interface that combines the benefits of RDDs with Spark SQL’s optimized execution engine. In this blog, we will learn the concept of Spark SQL Datasets.

We will also look at why datasets are needed and at the significance of encoders in datasets. Moreover, we will cover the features of SQL Datasets in Apache Spark.

Finally, we will see how to create SQL datasets in this Spark tutorial.

What is Spark SQL DataSet?

A dataset is an interface that provides the advantages of RDDs together with the convenience of Spark SQL’s execution engine. It is a distributed collection of data. We can create Spark datasets from JVM objects and then manipulate them using functional transformations (map, flatMap, filter, etc.).

The Datasets API is only available in Scala and Java. Python and R do not support it. However, because Python is very dynamic in nature, many of the benefits of the Datasets API are already available there. For example, we can access the field of a row by name naturally, as in row.columnName.

In addition, a dataset is a strongly-typed, immutable collection of objects that are mapped to a relational schema. At the core of the Datasets API is a new concept called an encoder, which is responsible for converting between JVM objects and the tabular representation.

The tabular representation is stored using Spark’s Tungsten binary format, which allows operations on serialized data and improves memory utilization. The Datasets API also feels familiar, since it provides many of the same functional transformations, such as map, flatMap, and filter.
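The functional transformations mentioned above can be sketched as follows. This is a minimal illustration, not a definitive implementation: the `Person` case class, the sample values, the application name, and the local master setting are all assumptions made for the example.

```scala
import org.apache.spark.sql.{Dataset, SparkSession}

object DatasetTransformations {
  // Hypothetical record type, used only for this illustration.
  final case class Person(name: String, age: Int)

  // Returns the name parts of all adults, using only typed transformations.
  def adultNameParts(spark: SparkSession): Seq[String] = {
    import spark.implicits._ // brings the built-in encoders into scope

    val people: Dataset[Person] =
      Seq(Person("Ann Lee", 34), Person("Bob Ray", 15)).toDS()

    val adults: Dataset[Person] = people.filter(_.age >= 18)   // filter
    val names: Dataset[String] = adults.map(_.name)            // map
    val parts: Dataset[String] = names.flatMap(_.split(" "))   // flatMap

    parts.collect().toSeq // action: triggers the actual computation
  }

  def main(args: Array[String]): Unit = {
    val spark = SparkSession.builder()
      .appName("dataset-transformations") // hypothetical application name
      .master("local[*]")                 // assumes a local run
      .getOrCreate()
    try println(adultNameParts(spark)) finally spark.stop()
  }
}
```

Each lambda works on `Person` or `String` values directly, so a mistyped field name is rejected by the Scala compiler rather than at runtime.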

The encoder is the primary concept in the serialization and deserialization framework. Spark ships with many built-in encoders, and they are quite advanced. Encoders generate bytecode to interact with off-heap data and provide on-demand access to individual attributes without deserializing an entire object.

A dataset is a structured, lazy query expression that is only triggered by an action. Internally, a dataset represents a logical plan, which describes the computation needed to produce the data. The logical plan is a base Catalyst query plan, made up of logical operators that together form the logical query plan.

Afterwards, once this plan is analyzed and resolved, a physical query plan can be formed. Most importantly, datasets reduce memory usage.

Why SQL DataSets in Spark?

As we know, both RDDs and Spark DataFrames had some drawbacks, and datasets were introduced to overcome those limitations. With a DataFrame, data cannot be manipulated without knowing its structure, and there is no provision for compile-time type safety.

With an RDD, on the other hand, there is no automatic optimization, so any optimization has to be done manually. Datasets were needed to overcome these issues.

Datasets inherit all the features of RDD and dataframe, such as:

- RDD’s convenience.

- Dataframe’s performance optimization.

- Scala’s static type-safety.

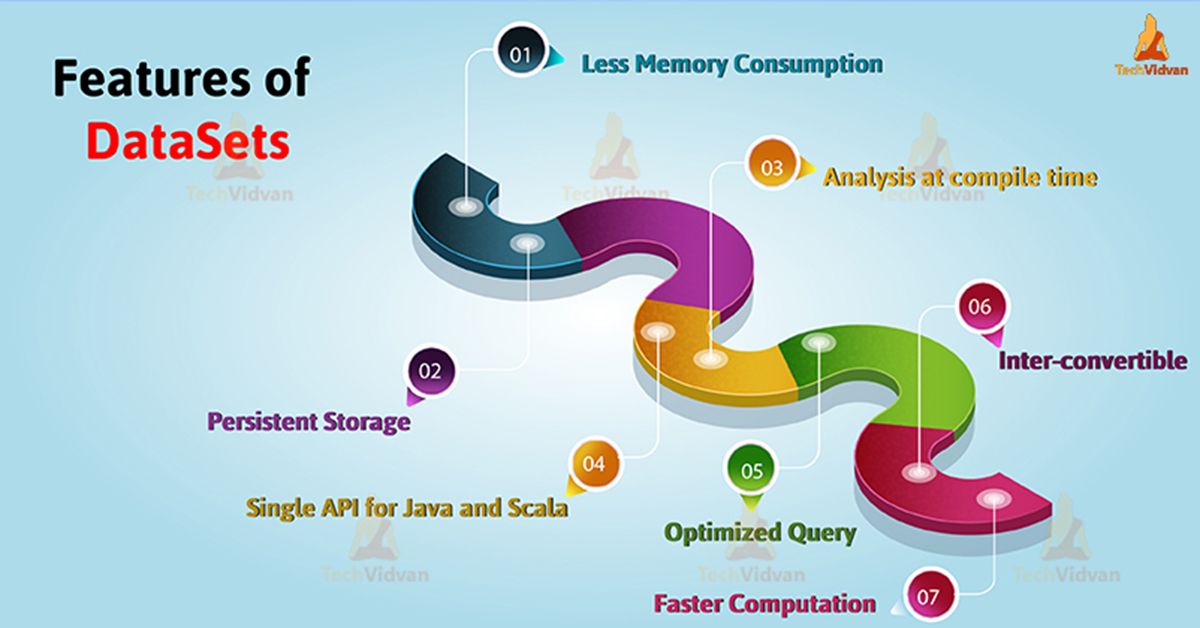

Features of SQL DataSets

Datasets have many features, such as:

1. Inter-convertible

It is possible to convert a type-safe dataset to an “untyped” DataFrame. In addition, DatasetHolder provides three methods for converting Seq[T] or RDD[T] types to a Dataset[T] or a DataFrame:

- toDS(): Dataset[T]

- toDF(): DataFrame

- toDF(colNames: String*): DataFrame
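The conversions listed above might look like this in practice. The sketch assumes a hypothetical `Item` case class and sample values, and expects the caller to supply an existing SparkSession. It also uses `as[Item]`, which is not in the list above, to go back from a DataFrame to a typed dataset.

```scala
import org.apache.spark.sql.{DataFrame, Dataset, SparkSession}

object DatasetConversions {
  // Hypothetical record type, used only for this illustration.
  final case class Item(label: String, quantity: Int)

  def convert(spark: SparkSession): (Seq[String], Seq[Item], Long) = {
    import spark.implicits._ // implicit conversions to DatasetHolder

    val items = Seq(Item("a", 1), Item("b", 2))

    val ds: Dataset[Item] = items.toDS()                                      // Seq[T] -> Dataset[T]
    val fromRdd: Dataset[Item] = spark.sparkContext.parallelize(items).toDS() // RDD[T] -> Dataset[T]

    val df: DataFrame = ds.toDF()                      // typed Dataset -> untyped DataFrame
    val renamed: DataFrame = items.toDF("name", "qty") // Seq[T] -> DataFrame with explicit column names

    // Going back from the untyped DataFrame to a typed Dataset.
    val typedAgain: Dataset[Item] = df.as[Item]

    (renamed.columns.toSeq, typedAgain.collect().toSeq, fromRdd.count())
  }
}
```

Without arguments, `toDF()` takes the column names from the case class fields. The overload with `colNames` replaces them positionally.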

2. Persistent Storage

Datasets in Spark are both serializable and queryable. Therefore, it is possible to save them to persistent storage.

3. Analysis at compile time

With datasets, both syntax and analysis errors can be checked at compile time. Neither DataFrames, RDDs, nor regular SQL queries offer both checks at compile time. With a DataFrame, for example, a misspelled column name only surfaces at runtime.
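The difference can be illustrated with a small sketch. The `Person` case class and the missing `salary` field are hypothetical. The sketch also assumes a classic (non-Connect) SparkSession, where `select` analyzes the plan eagerly and fails with an `AnalysisException`.

```scala
import org.apache.spark.sql.{AnalysisException, SparkSession}
import scala.util.Try

object CompileTimeChecks {
  // Hypothetical record type; note that it has no `salary` field.
  final case class Person(name: String, age: Int)

  // Returns true when the untyped lookup of a missing column fails at runtime.
  def untypedLookupFailsAtRuntime(spark: SparkSession): Boolean = {
    import spark.implicits._
    val ds = Seq(Person("Ann", 34)).toDS()

    // Typed access: the next line would be rejected by the Scala compiler,
    // because `salary` is not a member of Person.
    // ds.map(_.salary)

    // Untyped access: this compiles, and the mistake is only found
    // when Spark analyzes the query.
    val df = ds.toDF()
    Try(df.select("salary")).failed.toOption
      .exists(_.isInstanceOf[AnalysisException])
  }
}
```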

4. Optimized Query

Datasets provide optimized queries by using the Catalyst query optimizer and Tungsten. Tungsten improves the execution of Spark jobs, chiefly through more efficient use of memory and CPU.

5. Single API for Java and Scala

Datasets offer a single interface for Java and Scala. A single interface also reduces the burden on libraries, since they no longer have to deal with two different types of input. Thanks to this unification, code examples can be used from either language.

6. Faster Computation

Dataset implementations generally run faster than the equivalent RDD implementations, which results in better system performance.

7. Less Memory Consumption

Spark knows the structure of the data in a dataset, so it can create a more optimal layout in memory when caching. Hence, datasets reduce memory consumption.

How to create SQL DataSets in Spark

Several building blocks are involved in creating and working with a dataset:

1. SparkSession

SparkSession is the entry point to Spark SQL. It is the first object we have to create when developing SQL applications using datasets. As of Spark 2.0, SparkSession merges SQLContext and HiveContext into one object.

To create an instance of SparkSession, we use the SparkSession.builder method:

SparkSession.builder

We can stop the current SparkSession by using the stop method:

spark.stop
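Putting the two calls together, a session lifecycle could be sketched as below. The helper name `withLocalSession`, the application name, and the `local[*]` master are assumptions for a local run, not part of the Spark API.

```scala
import org.apache.spark.sql.SparkSession

object SessionLifecycle {
  // Hypothetical helper: builds a local session, runs `body`, and always stops the session.
  def withLocalSession[A](body: SparkSession => A): A = {
    val spark = SparkSession.builder()
      .appName("dataset-intro") // hypothetical application name
      .master("local[*]")       // assumes a local run; a cluster would use its own master URL
      .getOrCreate()
    try body(spark) finally spark.stop()
  }

  def main(args: Array[String]): Unit = {
    val total = withLocalSession { spark =>
      import spark.implicits._
      Seq(1, 2, 3).toDS().count() // a first, tiny dataset
    }
    println(total)
  }
}
```

Wrapping the work in `try ... finally` is one way to make sure `stop` is called even if the body throws.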

2. QueryExecution

QueryExecution represents the structured query execution pipeline of a dataset. To access the QueryExecution of a dataset, we use its queryExecution attribute. We also get a QueryExecution when we execute a logical plan in a SparkSession:

executePlan(plan: LogicalPlan): QueryExecution

executePlan executes the input logical plan to produce a QueryExecution in the current SparkSession.
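As a rough sketch, the stages of the pipeline can be inspected through the queryExecution attribute of a dataset. The example assumes Spark 2.x or 3.x, where `queryExecution` is available on `Dataset`. It is a developer-facing API and may differ in other versions. The sample data and object name are hypothetical.

```scala
import org.apache.spark.sql.SparkSession

object PlanInspection {
  // Returns a textual form of each plan stage of a small example query.
  def planStages(spark: SparkSession): Map[String, String] = {
    import spark.implicits._

    // Nothing is computed here: the dataset only describes a query.
    val ds = Seq(1, 2, 3, 4).toDS().filter(_ % 2 == 0).map(_ * 10)

    val qe = ds.queryExecution
    Map(
      "logical"   -> qe.logical.toString,       // plan as written
      "analyzed"  -> qe.analyzed.toString,      // after analysis and resolution
      "optimized" -> qe.optimizedPlan.toString, // after Catalyst optimization
      "physical"  -> qe.executedPlan.toString   // physical plan that would run
    )
  }
}
```

For everyday use, `ds.explain(true)` prints a similar overview without touching QueryExecution directly.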

3. Encoder

Encoders translate between JVM objects and Spark’s internal binary format. In other words, an encoder lets us serialize objects, whether for processing or for transmission over the network.
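To make this concrete, the sketch below builds an encoder explicitly and looks at the relational schema it maps to. The `Person` case class is hypothetical. In most programs the encoder is supplied implicitly through `spark.implicits._` rather than created by hand.

```scala
import org.apache.spark.sql.{Encoder, Encoders}

object EncoderSketch {
  // Hypothetical record type, used only for this illustration.
  final case class Person(name: String, age: Int)

  // Explicit encoder for a case class (any Product type).
  val personEncoder: Encoder[Person] = Encoders.product[Person]

  // Column names of the tabular representation the encoder maps Person objects to.
  def fieldNames: Seq[String] = personEncoder.schema.fieldNames.toSeq

  def main(args: Array[String]): Unit = {
    println(personEncoder.schema.treeString)   // schema derived from the case class
    println(Encoders.STRING.schema.treeString) // one of the built-in primitive encoders
  }
}
```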

Conclusion

As a result, we have seen that datasets are a strongly typed data structure in Spark. Basically, datasets represent structured queries. Datasets offer the functionality of both RDDs and DataFrames, and they let us generate optimized queries.

As discussed above, datasets also lessen memory consumption, which improves the performance of the system, and they provide a single API for both Java and Scala. Hence, datasets boost the overall efficiency of the system.