Apache Spark Executor – For Executing Tasks

Executors are the distributed agents in Spark that are responsible for executing tasks. In this blog, we will cover the whole concept of the Apache Spark executor.

We will also learn how an executor instance is created, how the launchTask method launches a task, and how the stop method stops an executor and requests a metrics report.

What Is Spark Executor

Executors are distributed agents in Spark that execute tasks. An executor is created in the following cases:

- When CoarseGrainedExecutorBackend receives RegisteredExecutor message. Only for Spark Standalone and YARN.

- When Mesos’s MesosExecutorBackend is registered.

- When LocalEndpoint is created for local mode.

An executor typically runs for the complete lifespan of a Spark application, which is called static allocation of executors. Alternatively, we can opt for dynamic allocation.
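To make the two allocation styles concrete, here is a minimal sketch that expresses them as plain property maps. The property keys are standard Spark configuration names; the values, the object name `ExecutorAllocationSketch`, and the helper `toSubmitArgs` are illustrative assumptions, not recommendations.

```scala
// Sketch only: plain maps, no Spark dependency needed to read or run this.
object ExecutorAllocationSketch {
  // Static allocation: a fixed number of executors for the whole application.
  val staticAllocation: Map[String, String] = Map(
    "spark.executor.instances" -> "4", // example value
    "spark.executor.cores" -> "2",     // example value
    "spark.executor.memory" -> "4g"    // example value
  )

  // Dynamic allocation: Spark adds and removes executors with the workload.
  // It is commonly paired with the external shuffle service.
  val dynamicAllocation: Map[String, String] = Map(
    "spark.dynamicAllocation.enabled" -> "true",
    "spark.dynamicAllocation.minExecutors" -> "1",  // example value
    "spark.dynamicAllocation.maxExecutors" -> "10", // example value
    "spark.shuffle.service.enabled" -> "true"
  )

  // Hypothetical helper: render a map as spark-submit "--conf key=value" arguments.
  def toSubmitArgs(conf: Map[String, String]): List[String] =
    conf.toList.sortBy(_._1).flatMap { case (k, v) => List("--conf", s"$k=$v") }
}
```

For example, `ExecutorAllocationSketch.toSubmitArgs(ExecutorAllocationSketch.staticAllocation)` yields the arguments one could append to a spark-submit command line.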

In other words, an executor is a process launched for an application on a worker node. It runs tasks and keeps data in memory or on disk across them. Each application has its own executors.

In addition, an executor reports partial metrics for active tasks to the heartbeat receiver on the driver. It also offers in-memory storage for RDDs that are cached in Spark applications, through the block manager. When an executor starts, it registers with the driver and then communicates with it directly to execute tasks.

Executor offers are described by the executor id and the host on which the executor runs. An executor can run multiple tasks over its lifetime, both sequentially and in parallel, and it tracks running tasks by their task ids in the runningTasks internal registry. For launching tasks, executors use the “Executor task launch worker” thread pool.

Moreover, an executor sends metrics and heartbeats by using a heartbeat sender thread. It is possible to have as many Spark executors as data nodes, and each can have as many cores as you can get from the cluster. We can describe executors by their id, hostname, environment (as SparkEnv), and classpath.
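The heartbeat mechanism described above can be sketched with a single scheduled daemon thread that periodically reports which tasks are still running. This is a simplified model, not Spark's implementation: `Heartbeat`, `Heartbeater`, and the `send` callback are hypothetical names, and the registry is any map from task id to task name.

```scala
import java.util.concurrent.{Executors, ScheduledExecutorService, ThreadFactory, TimeUnit}

object HeartbeatSketch {
  // Hypothetical payload: the executor id plus the ids of tasks still running.
  final case class Heartbeat(executorId: String, activeTaskIds: List[Long])

  final class Heartbeater(
      executorId: String,
      runningTasks: scala.collection.Map[Long, String],
      send: Heartbeat => Unit) {

    // One daemon thread, so it never keeps the JVM alive on its own.
    private val scheduler: ScheduledExecutorService =
      Executors.newSingleThreadScheduledExecutor(new ThreadFactory {
        override def newThread(r: Runnable): Thread = {
          val t = new Thread(r, "driver-heartbeater")
          t.setDaemon(true)
          t
        }
      })

    // Build one heartbeat from the current registry contents and hand it to `send`.
    def reportHeartbeat(): Unit =
      send(Heartbeat(executorId, runningTasks.keys.toList.sorted))

    // Send a heartbeat immediately and then every `intervalMs` milliseconds.
    def start(intervalMs: Long): Unit = {
      scheduler.scheduleAtFixedRate(new Runnable {
        override def run(): Unit = reportHeartbeat()
      }, 0L, intervalMs, TimeUnit.MILLISECONDS)
      ()
    }

    def stop(): Unit = scheduler.shutdown()
  }
}
```

In a real application the interval is governed by the `spark.executor.heartbeatInterval` setting; here it is just a parameter of the sketch.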

Note: Executors are managed exclusively by executor backends.

Creating Spark Executor Instance

An executor is created from the following inputs:

- Executor ID.

- SparkEnv, which gives access to the local MetricsSystem, the block manager, and the local serializer.

- Executor’s hostname.

- A collection of user-defined JARs to add to tasks’ classpath. By default, it is empty.

- A flag indicating whether the executor runs in local or cluster mode (disabled by default, i.e. cluster mode is assumed).

When an executor is created, the following INFO message appears in the logs:

INFO Executor: Starting executor ID [executorId] on host [executorHostname]
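As a rough illustration, the inputs listed above can be modelled as a small case class. This is a sketch, not Spark's actual constructor: `ExecutorInputs` and `startupMessage` are hypothetical names, and SparkEnv is left out because it requires a running Spark environment.

```scala
object ExecutorInputsSketch {
  // Hypothetical model of the inputs described in the list above.
  final case class ExecutorInputs(
      executorId: String,
      executorHostname: String,
      userClassPath: Seq[java.net.URL] = Nil, // empty by default
      isLocal: Boolean = false)               // cluster mode by default

  // Reproduces the text of the INFO message quoted above.
  def startupMessage(in: ExecutorInputs): String =
    s"Starting executor ID ${in.executorId} on host ${in.executorHostname}"
}
```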

Launching Task — launchTask Method

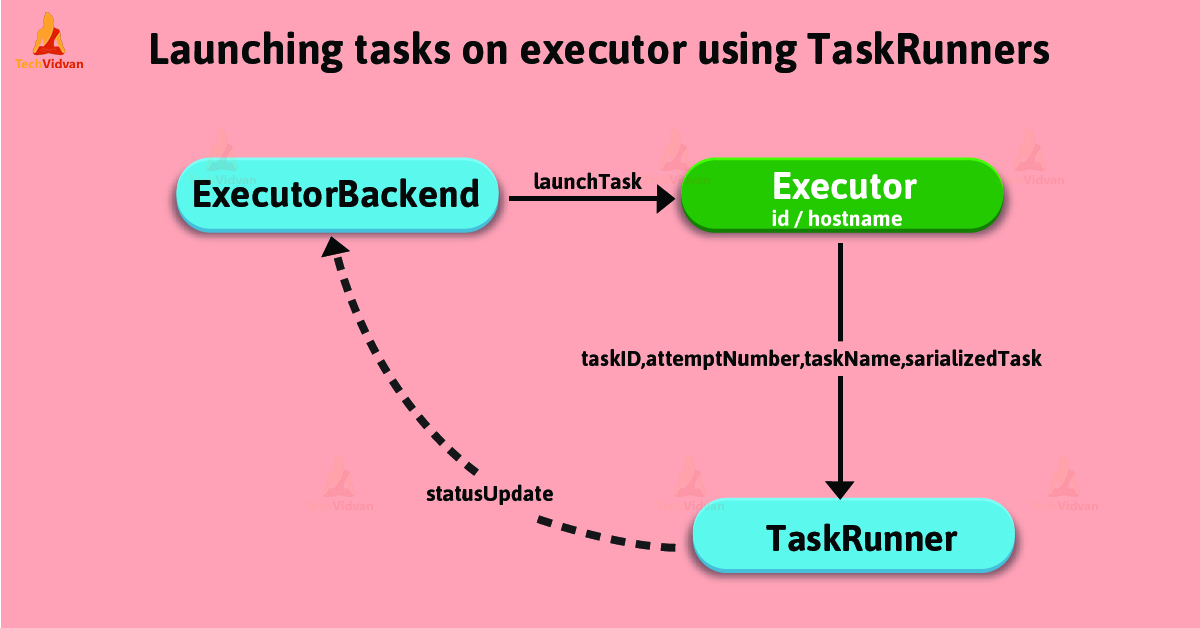

The launchTask method executes the input serializedTask concurrently.

launchTask(context: ExecutorBackend, taskId: Long, attemptNumber: Int, taskName: String, serializedTask: ByteBuffer): Unit

Internally, launchTask creates a TaskRunner, registers it in the runningTasks internal registry under its taskId, and finally executes it on the “Executor task launch worker” thread pool.
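The create, register, execute sequence can be sketched in a few lines. This is a simplified model under stated assumptions: `MiniExecutor` and its `TaskRunner` are hypothetical stand-ins, the task body is a plain function rather than a serialized task, and a Scala `TrieMap` replaces whatever concurrent map the real executor uses.

```scala
import java.util.concurrent.{ExecutorService, Executors, TimeUnit}
import scala.collection.concurrent.TrieMap

object LaunchTaskSketch {
  // Hypothetical stand-in for a task runner: runs the body, then reports completion.
  final class TaskRunner(val taskId: Long, val taskName: String,
                         body: () => Unit, onFinish: Long => Unit) extends Runnable {
    override def run(): Unit =
      try body() finally onFinish(taskId)
  }

  final class MiniExecutor {
    // Registry of running tasks, keyed by task id.
    val runningTasks: TrieMap[Long, TaskRunner] = TrieMap.empty[Long, TaskRunner]
    private val threadPool: ExecutorService = Executors.newCachedThreadPool()

    def launchTask(taskId: Long, taskName: String)(body: () => Unit): Unit = {
      // 1. create the runner, 2. register it, 3. execute it on the pool.
      val runner = new TaskRunner(taskId, taskName, body,
        id => { runningTasks.remove(id); () })
      runningTasks.put(taskId, runner)
      threadPool.execute(runner)
    }

    def shutdown(): Unit = threadPool.shutdown()

    def awaitTermination(seconds: Long): Boolean =
      threadPool.awaitTermination(seconds, TimeUnit.SECONDS)
  }
}
```

Registering the runner before handing it to the pool means the registry already contains the task by the time it starts running, and the `finally` clause removes it even if the task body throws.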

“Executor task launch worker” Thread Pool — threadPool Property

To launch tasks, the executor uses threadPool, a daemon cached thread pool whose threads are named after the task launch worker id. threadPool is created when the executor is created and is shut down when the executor stops.
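A daemon cached thread pool with named threads can be built from the standard `java.util.concurrent` classes. The sketch below shows one way to do it; the helper name `newDaemonCachedThreadPool` and the `prefix-N` naming scheme are assumptions made for illustration, not a copy of Spark's utility code.

```scala
import java.util.concurrent.{ExecutorService, Executors, ThreadFactory}
import java.util.concurrent.atomic.AtomicInteger

object DaemonPoolSketch {
  // Cached pool: threads are created on demand and reused when idle.
  // Daemon threads: they do not prevent the JVM from exiting.
  def newDaemonCachedThreadPool(prefix: String): ExecutorService = {
    val counter = new AtomicInteger(0)
    val factory = new ThreadFactory {
      override def newThread(r: Runnable): Thread = {
        val t = new Thread(r, s"$prefix-${counter.getAndIncrement()}")
        t.setDaemon(true)
        t
      }
    }
    Executors.newCachedThreadPool(factory)
  }
}
```

Naming the threads makes them easy to spot in thread dumps and log lines, which helps when diagnosing a stuck task.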

Stopping Executor — Stop Method

This method stops the executor and requests a report from the MetricsSystem.

stop(): Unit

The stop method uses SparkEnv to access the current MetricsSystem. It shuts down the driver-heartbeater thread and waits at most 10 seconds for it to terminate. Next, it shuts down the “Executor task launch worker” thread pool. Finally, when not running in local mode, it requests SparkEnv to stop.

Note: stop() is called when CoarseGrainedExecutorBackend and LocalEndpoint stop their managed executors.
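The shutdown order described above can be sketched as follows. This is a simplified model: `StoppableExecutor` is a hypothetical class, and the `reportMetrics` and `stopEnv` callbacks stand in for the MetricsSystem report and the SparkEnv stop request.

```scala
import java.util.concurrent.{ExecutorService, Executors, ScheduledExecutorService, TimeUnit}

object StopSketch {
  final class StoppableExecutor(
      isLocal: Boolean,
      reportMetrics: () => Unit, // stand-in for the metrics report request
      stopEnv: () => Unit) {     // stand-in for stopping the environment

    private val heartbeater: ScheduledExecutorService =
      Executors.newSingleThreadScheduledExecutor()
    private val threadPool: ExecutorService = Executors.newCachedThreadPool()

    def stop(): Unit = {
      reportMetrics()                                    // request a final report
      heartbeater.shutdown()                             // stop sending heartbeats
      heartbeater.awaitTermination(10, TimeUnit.SECONDS) // wait at most 10 seconds
      threadPool.shutdown()                              // stop the task launch pool
      if (!isLocal) stopEnv()                            // only outside local mode
    }
  }
}
```

Bounding the wait keeps a hung heartbeat thread from blocking shutdown indefinitely.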

Conclusion

In this blog, we have learned the whole concept of Spark executors and seen how they execute tasks. We can also run as many executors as the cluster allows, which can enhance the performance of the system.