What is Big Data and Hadoop – Raise the Bar & be a Star

Curious about what Big Data and Hadoop are, and how Hadoop handles the Big Data problem?

This article first introduces Big Data and Apache Hadoop, then explains Hadoop’s core components.

Moving forward, you will explore how Hadoop solves the Big Data problem.

Let’s start with an introduction to Big Data.

What is Big Data?

Big Data refers to data sets so large and complex that we cannot handle them with conventional database processing techniques. Such data cannot practically be stored and processed in a traditional RDBMS.

Big Data sets often run to terabytes or petabytes in size. The data comes in varying formats: structured (tables in an RDBMS), semi-structured (XML documents), or unstructured (audio, video, images).

By one widely cited estimate, about 1.7 megabytes of data are generated every second for each person on Earth. However, only about 0.5% of all data is estimated to be analyzed at present.

Before the development of Apache Hadoop, it was very difficult for organizations to store and manage Big Data.

What is Hadoop?

Hadoop is an open-source software framework developed under the Apache Software Foundation. It is designed for storing and processing vast amounts of data (known as Big Data), and it addresses many of the storage and processing problems that Big Data creates.

Hadoop stores and processes data across clusters of inexpensive machines. A Hadoop cluster consists of nodes connected by a network. Hadoop clusters can store data ranging in size from terabytes to petabytes, and they can store and process structured, semi-structured, and unstructured data.

It is an open-source framework and is highly cost-effective. Because the work is spread across many nodes, a large enough cluster can process very large data sets far faster than a single machine could.

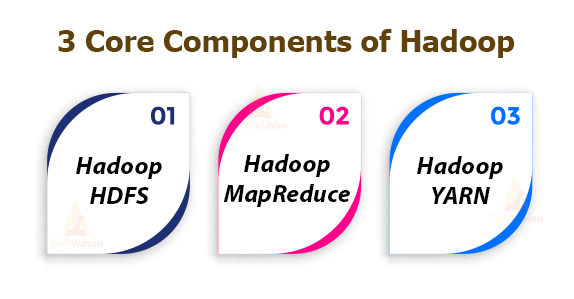

Hadoop comprises three core components. They are:

1. Hadoop HDFS: The Hadoop Distributed File System (HDFS) is the storage layer in Apache Hadoop. HDFS divides data into blocks and stores them across multiple machines. Two HDFS daemons run on a Hadoop cluster: the NameNode, which keeps the file system metadata, and the DataNodes, which store the blocks.

2. Hadoop MapReduce: Hadoop MapReduce is the heart of the Hadoop framework. It is the processing layer in Hadoop. It provides a software framework for writing applications that process vast amounts of data. Hadoop MapReduce processes data in a distributed manner in a Hadoop cluster.

3. Hadoop YARN: Yet Another Resource Negotiator, also known as YARN, is the resource management layer of Hadoop. It is responsible for managing resources among the applications running in the Hadoop cluster.
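To make the HDFS storage model more concrete, the sketch below shows how a file might be cut into fixed-size blocks and how copies of each block might be spread over DataNodes. It is only an illustration: the 128 MB block size and the replication factor of 3 are the usual HDFS defaults rather than values taken from this article, the node names are hypothetical, and the round-robin placement is a simplification (real HDFS placement is decided by the NameNode and is rack-aware).

```python
# Illustrative sketch only: real HDFS placement is rack-aware and decided by the NameNode.
BLOCK_SIZE = 128 * 1024 * 1024  # 128 MB, the usual default block size (assumption)
REPLICATION = 3                 # the usual default replication factor (assumption)


def split_into_blocks(file_size, block_size=BLOCK_SIZE):
    """Return the sizes of the blocks that a file of file_size bytes is split into."""
    full_blocks, remainder = divmod(file_size, block_size)
    sizes = [block_size] * full_blocks
    if remainder:
        sizes.append(remainder)  # the last block holds only the leftover bytes
    return sizes


def place_replicas(num_blocks, datanodes, replication=REPLICATION):
    """Assign each block to `replication` distinct DataNodes (hypothetical round-robin policy)."""
    if replication > len(datanodes):
        raise ValueError("need at least as many DataNodes as replicas")
    placement = {}
    for block_id in range(num_blocks):
        placement[block_id] = [
            datanodes[(block_id + r) % len(datanodes)] for r in range(replication)
        ]
    return placement


if __name__ == "__main__":
    blocks = split_into_blocks(300 * 1024 * 1024)  # a 300 MB file
    nodes = ["dn1", "dn2", "dn3", "dn4"]           # hypothetical DataNode names
    for block_id, holders in place_replicas(len(blocks), nodes).items():
        print(block_id, blocks[block_id], holders)
```

Because every block lives on several machines, losing one node does not lose data, and adding nodes adds both storage and places where processing can run.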

How Hadoop Solves the Big Data Problem

1. Hadoop is designed to run on a cluster of machines

Hadoop stores and processes data in a distributed manner. The Hadoop cluster comprises a number of commodity machines connected by a network. So we can scale the storage easily just by adding machines, without any downtime.

2. Hadoop clusters scale horizontally

We can scale Hadoop storage and computing power both horizontally and vertically. Horizontal scaling means adding more nodes to the cluster, which can be done without any downtime. It eliminates the need to buy expensive high-end hardware.

3. Hadoop can handle unstructured data

Hadoop can store and process data of any format. It can handle both arbitrary text and binary data, so it easily handles unstructured data.

4. Hadoop clusters provide storage and computing

Hadoop provides storage as well as processing all in one place.

We don’t need separate storage and processing tools for dealing with big data.

5. Hadoop provides storage at reasonable cost

Hadoop clusters consist of commodity hardware, so they are highly cost-effective. We don’t need high-end machines for storing big data.

6. Hadoop allows for the capture of new or more data

With Hadoop, organizations can capture data of any type and size. For example, companies can capture website click logs.

7. With Hadoop, you can store data longer

To manage the volume of data stored, businesses periodically remove older data. For example, they may keep logs for only the last three months and purge anything older.

Hadoop enables them to store historical data for a long time, thus allowing new analytics to be performed on older historical data.

8. Hadoop provides scalable analytics

Hadoop provides distributed processing which means we can process huge volumes of data in parallel. MapReduce is the compute framework of Hadoop and can be scaled to process petabytes of data.
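The map, shuffle, and reduce phases behind this parallelism can be sketched in a few lines of plain Python. This is not Hadoop code: threads stand in for cluster nodes, the function names are made up for this example, and the `<user> <page>` click-log format is a hypothetical one chosen to match the click logs mentioned earlier.

```python
from collections import defaultdict
from concurrent.futures import ThreadPoolExecutor


def map_clicks(split):
    """Map phase: emit a (page, 1) pair for every click record in one input split."""
    return [(line.split()[1], 1) for line in split if line.strip()]


def shuffle(mapped_outputs):
    """Shuffle phase: group all emitted values by key across every mapper's output."""
    grouped = defaultdict(list)
    for pairs in mapped_outputs:
        for key, value in pairs:
            grouped[key].append(value)
    return grouped


def reduce_counts(grouped):
    """Reduce phase: sum the values collected for each key."""
    return {key: sum(values) for key, values in grouped.items()}


def run_job(splits, workers=2):
    # Threads stand in for cluster nodes; each one processes its own split.
    with ThreadPoolExecutor(max_workers=workers) as pool:
        mapped = list(pool.map(map_clicks, splits))
    return reduce_counts(shuffle(mapped))


if __name__ == "__main__":
    # Hypothetical log format: "<user> <page>"
    splits = [
        ["u1 /home", "u2 /cart", "u1 /home"],
        ["u3 /home", "u2 /checkout"],
    ]
    print(run_job(splits))
```

Each split is mapped independently, which is why adding nodes lets the same job handle more data: only the shuffle step needs to bring records with the same key together.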

Hadoop for Big Data

Before Hadoop was developed, it was a challenge for organizations to store and analyze unstructured data.

Hadoop makes the storage and analysis of Big data easy due to its core components such as HDFS, YARN, and MapReduce.

Hadoop’s unique features make it attractive for storing and processing big data. One of the main features of Hadoop is its ability to accept and manage data in its raw form.

Hadoop allows organizations to store and process data of any format. We use it for Big data solutions because Hadoop tools are very efficient at collecting and processing a large pool of data.

The Hadoop framework is highly cost-effective, which is one strong reason for companies to adopt it. It has been reported that data storage that could once cost up to $50,000 now costs only a few thousand dollars with Hadoop HDFS.

The flexibility of the Hadoop framework is another reason that makes Hadoop the go-to option for Big data storage and analysis.

Hadoop clusters can store and process any desired form of data. This gives organizations room to collect and analyze data and to generate insights into market trends and customer behavior.

Hadoop enables access to historical data, which is another reason for Hadoop’s popularity.

Because data can be kept in Hadoop for long periods, we can run analysis on stored data whenever necessary. This historical data is critical to big data analytics.

MapReduce is the data processing component of Hadoop and is compatible with other tools. MapReduce jobs are written natively in Java, and through Hadoop Streaming they can also be written in languages such as Ruby, Python, and Perl.
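As an illustration of using a language other than Java, here is a minimal word-count sketch in the style of Hadoop Streaming, the utility that lets any program reading standard input and writing standard output act as a mapper or reducer. It assumes tab-separated `key<TAB>value` lines and assumes the reducer receives its input sorted by key, as it would after the shuffle. The `map`/`reduce` command-line argument is only a convention of this sketch, not something Hadoop requires.

```python
import sys
from itertools import groupby


def mapper(lines):
    """Emit one 'word<TAB>1' line per word, as a streaming mapper would write to stdout."""
    for line in lines:
        for word in line.split():
            yield f"{word.lower()}\t1"


def reducer(sorted_lines):
    """Sum counts per word; assumes input lines arrive sorted by key, as after the shuffle."""
    pairs = (line.rstrip("\n").split("\t", 1) for line in sorted_lines)
    for word, group in groupby(pairs, key=lambda pair: pair[0]):
        yield f"{word}\t{sum(int(count) for _, count in group)}"


if __name__ == "__main__":
    # Run as "script.py map" or "script.py reduce"; this mode switch is specific to the sketch.
    stage = mapper if sys.argv[1:] == ["map"] else reducer
    for out in stage(sys.stdin):
        print(out)
```

The same script can be tried without a cluster by piping a text file through the map stage, then `sort`, then the reduce stage, which mimics what the framework does between the two phases.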

Challenges of using Hadoop

- MapReduce programming is not efficient for interactive and iterative analytics tasks, because nodes do not intercommunicate except through the sort and shuffle phase. Iterative algorithms require multiple shuffle and sort passes, each of which writes intermediate files, and this makes them inefficient. MapReduce is the best match for batch processing jobs.

- It can be difficult to find entry-level programmers with the Java skills to write MapReduce programs. This difficulty can be overcome by using Hive, which is built on top of Hadoop and uses HiveQL (HQL), a query language similar to SQL.

- Security is also a challenge while using Hadoop. Support for the Kerberos authentication protocol is a major step toward making the Hadoop environment secure.

- Hadoop doesn’t have full-featured, easy-to-use tools for data management and data cleansing. It also lacks tools for standardization and data quality.

Summary:

In summary, Apache Hadoop is a reliable, cost-effective framework for storing and processing Big Data. It addresses the core Big Data problems of storage and processing. Due to its unique features, companies are adopting Hadoop to deal with big data and gain business insights.

With Hadoop, we can store Big Data for a longer time and perform analysis on historical data as well. It enables organizations to store and process Big Data in a distributed manner.